NerDS Lab

The Neural Data Science (NerDS) Lab develops machine learning methods for understanding neural activity, behavior, and biological systems. We build foundation models and representation learning approaches that decode neural activity, model neural and behavioral dynamics, and identify shared structure across animals, brain regions, and recording modalities.

Our research spans neural foundation models, self-supervised learning, neural forecasting, graph representation learning, and multimodal models that connect neural activity, behavior, and biological structure.

Research Areas


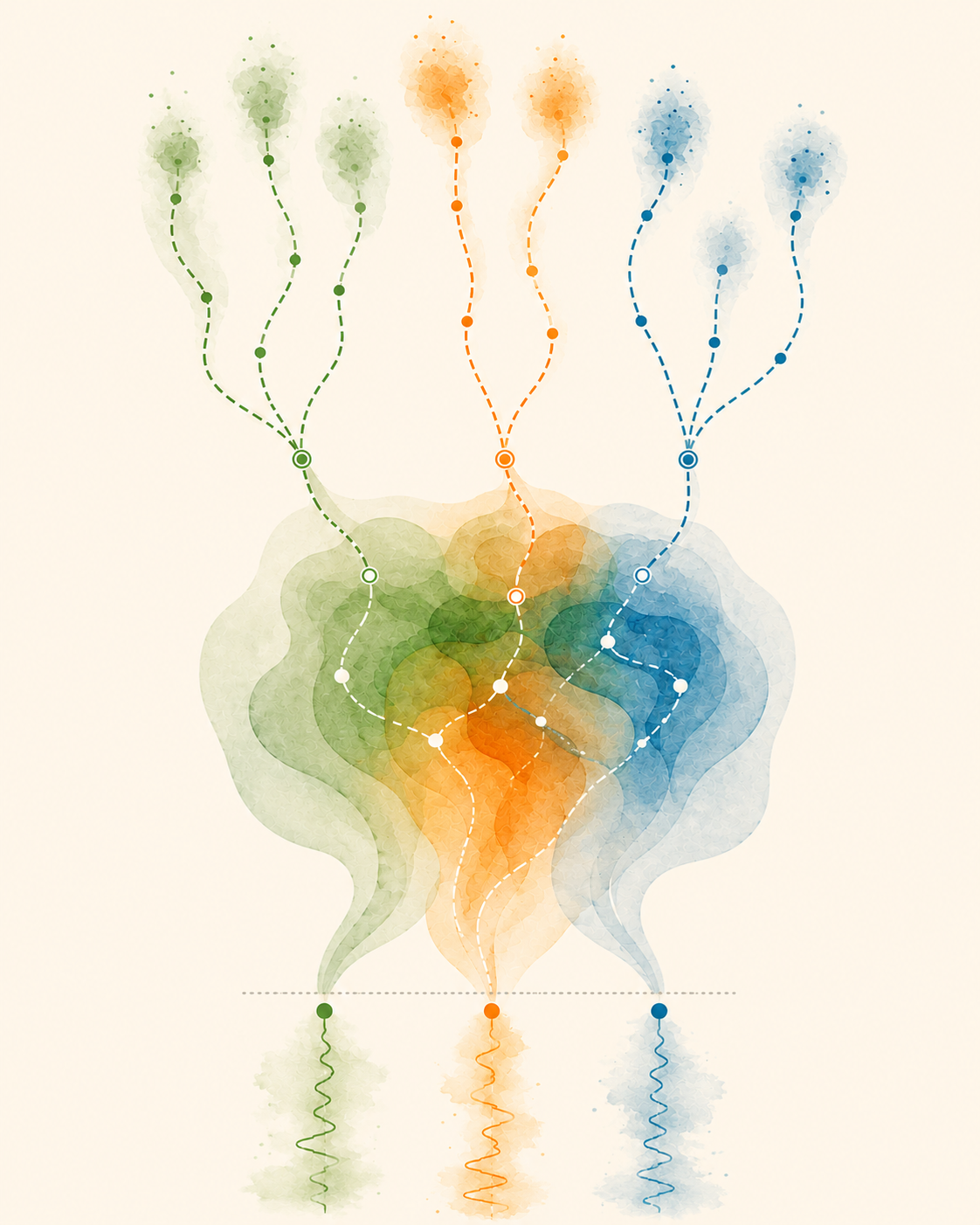

Neural Foundation Models

We develop large-scale foundation models for neural activity that learn transferable representations across animals, brain regions, recording modalities, and behavioral tasks. Our work studies how large-scale pretraining enables zero-shot decoding, neural forecasting, and the discovery of shared computational principles across neural systems.

Featured Papers:


Neuron Identity and Brain Organization

We build machine learning systems that reveal structure in large-scale neural recordings, including representations of neuron identity, brain regions, cell types, and population dynamics. Our models learn from neural activity directly and generalize across animals, sessions, and experimental conditions.

Featured Papers:


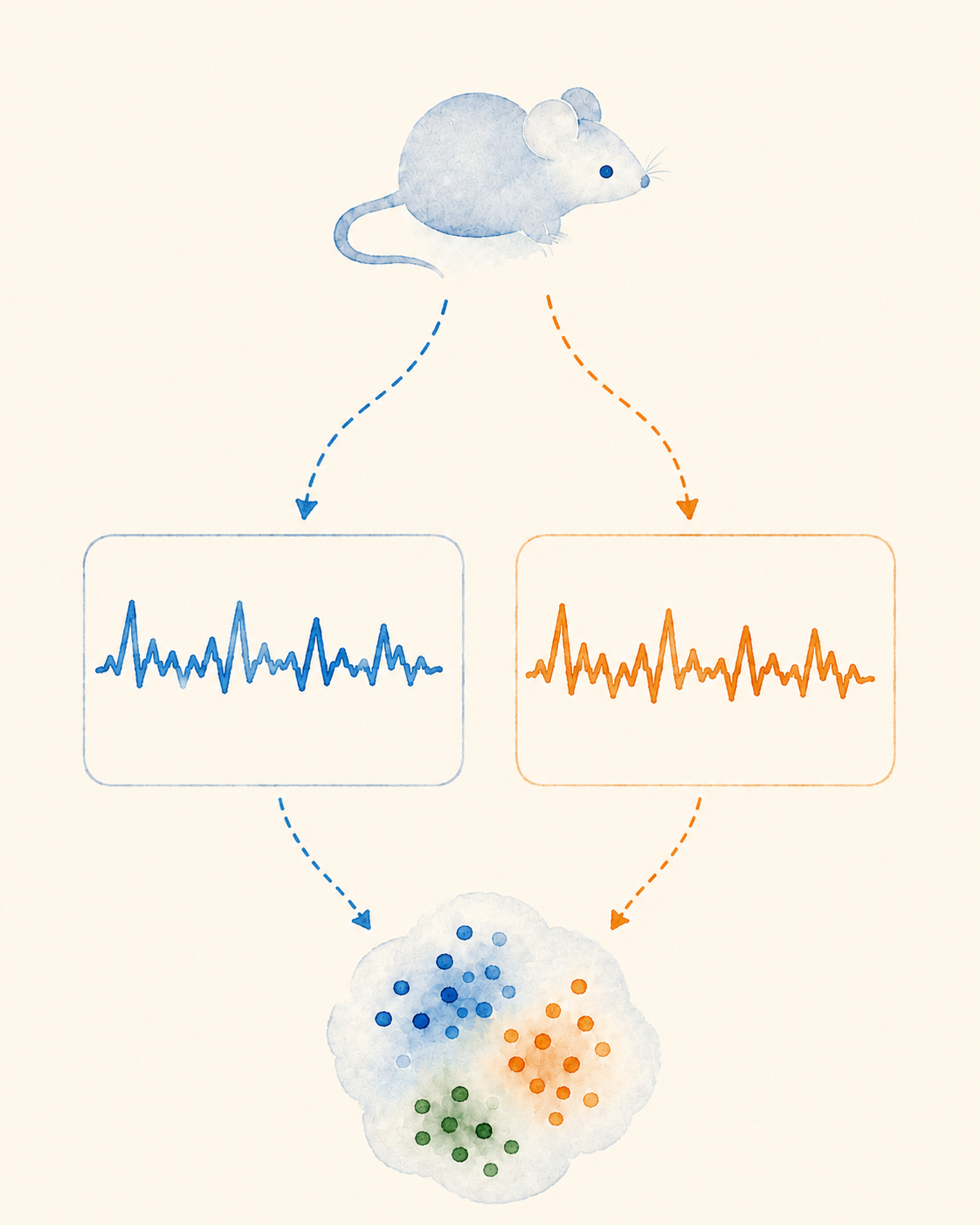

Self-Supervised and Contrastive Learning

We develop self-supervised and contrastive learning methods that uncover structure in neural, behavioral, and biological data without requiring labels. Our work focuses on multiscale representation learning, event-driven objectives, and transfer across datasets, tasks, and modalities.


Neural Forecasting and Generative Modeling

We develop forecasting and generative models that predict neural and behavioral dynamics across multiple timescales. Our work studies how structured temporal representations, event-driven learning, and multiscale dynamics improve forecasting, simulation, and representation learning in biological systems.


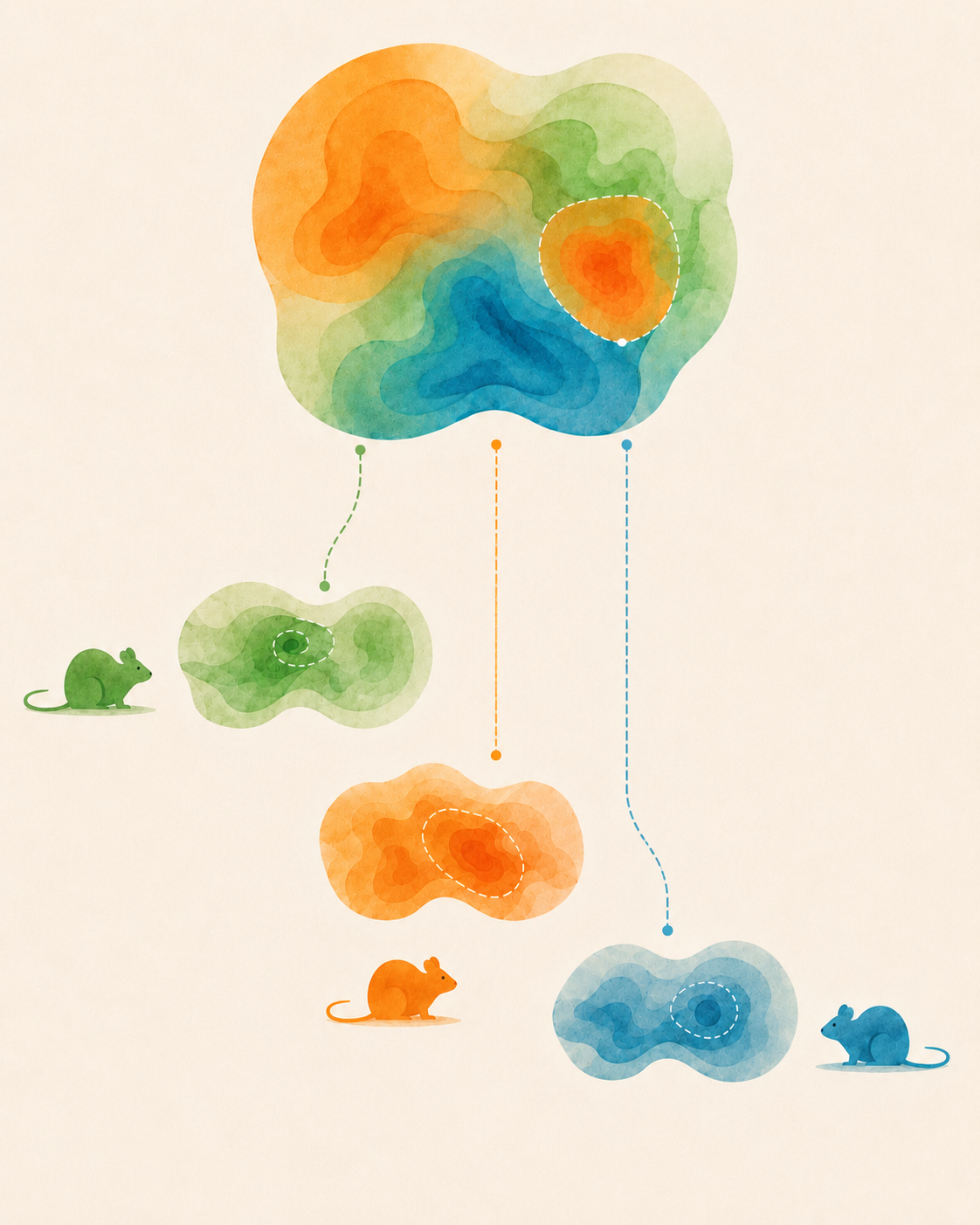

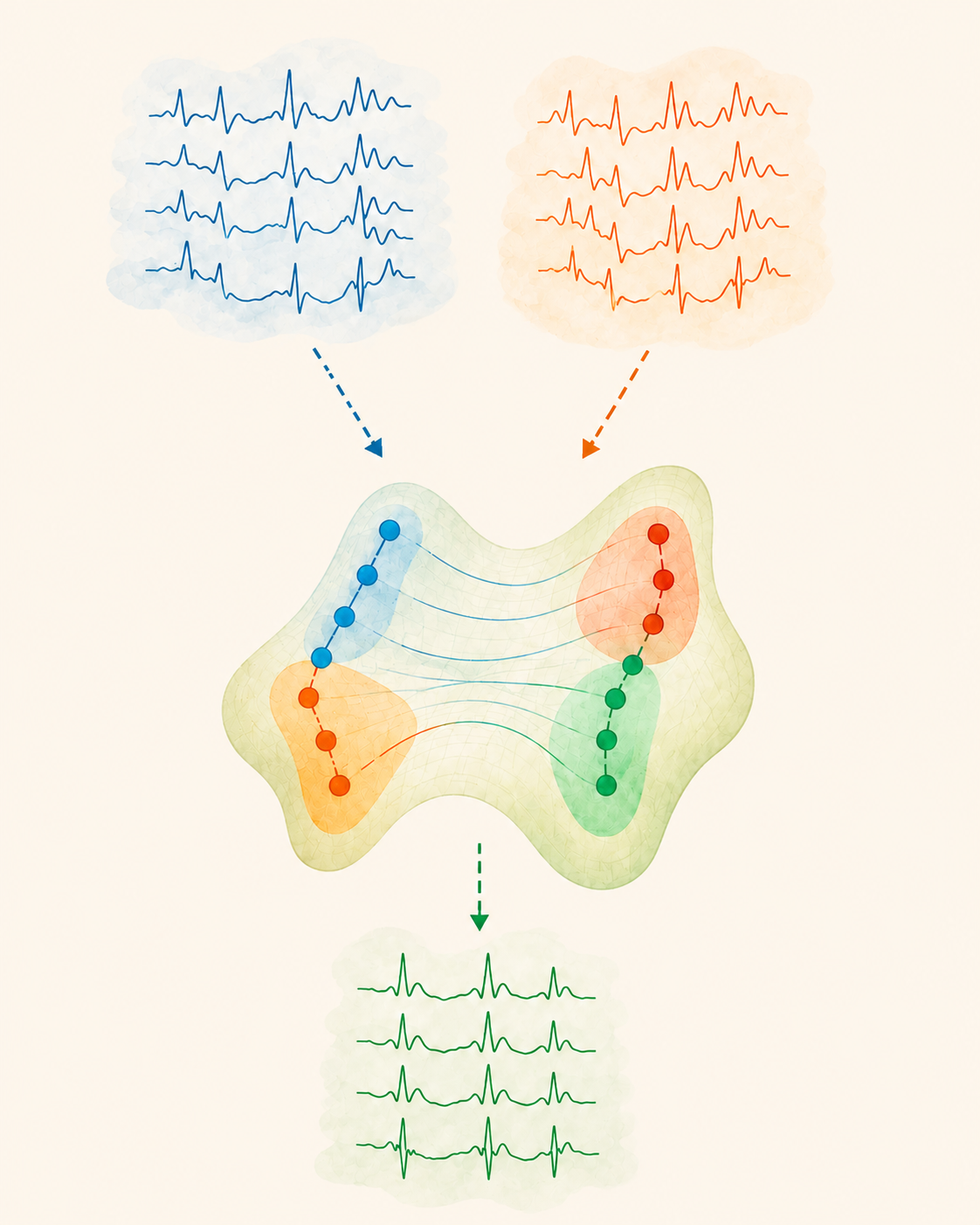

Domain Adaptation and Representation Alignment

We design representation alignment methods that adapt across subjects, devices, datasets, and recording conditions. Our work uses optimal transport, distribution alignment, and domain generalization to build models that remain robust under real-world shifts in neural, behavioral, and physiological time series.

Featured Papers:


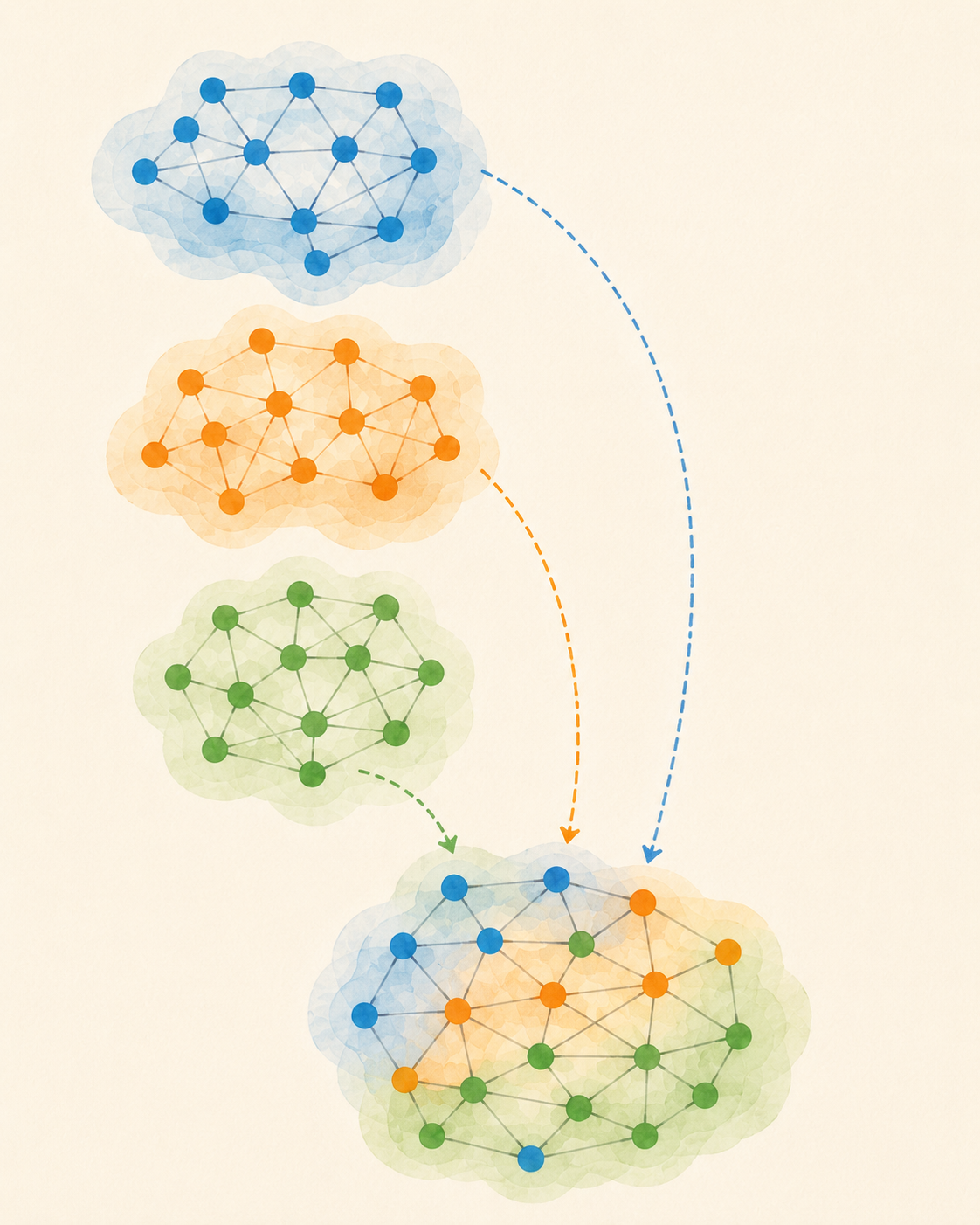

Graph Learning

We develop graph transformers, relational learning methods, and graph foundation models that learn transferable representations across diverse graph domains. Our work studies scalable graph pretraining, multimodal relational learning, and representation transfer across heterogeneous graph structures.

Featured Papers:


Funding

We are fortunate to receive funding from the following sources: